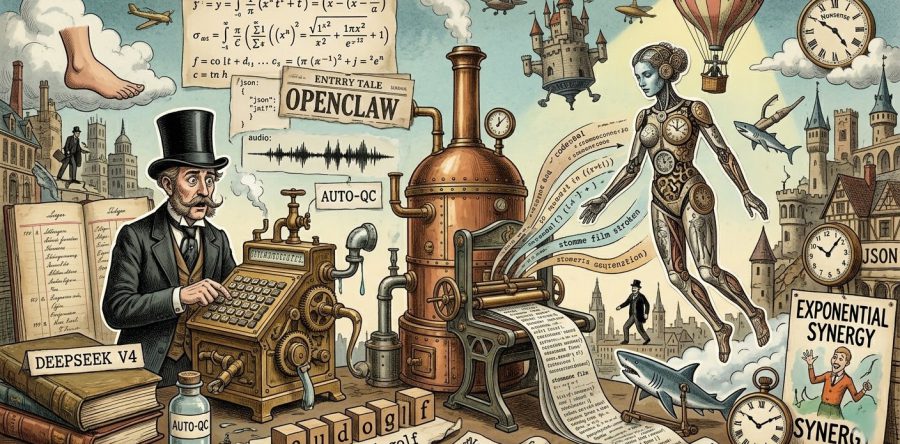

In the world of AI development, there is a big difference between 'fun experiments' and 'productive harmony'. In my search for the ideal digital companion for a complex creative process – from long videos to audio and images – I embarked on an adventure with OpenClaw. It led me along the edges of the cloud until I found the key in a new player: DeepSeek.

The Start: Harmony with Route-LLM

For everyone who wants to embark on the OpenClaw adventure, I have one piece of advice: start with Route-LLM. It worked perfectly for me for a long time. The beauty of this system (via Abacus AI) is its versatility: all the top models are available, and the system switches between them automatically based on your query. It is the ideal "entry-level harmony" for a creative process in which you don't want to constantly tinker with the back end yourself.

The Wall: Context and Costly Tokens

But those who build big run into big limits. My project – orchestrating a complete pipeline for long videos – requires my agent to have an enormous memory. OpenClaw must constantly send along large amounts of documentation and history.

Here I ran into two major problems:

- The "Forgetfulness": The standard models fell short on context memory for the complex structures I was building.

- The Token Bill: Because there was no caching, I paid for the full set of instructions and documents again with every single query.

I tried Gemini via Google AI Studio. Although Gemini caches fantastically, I noticed that Google's API blocks an agent workflow (like OpenClaw) fairly quickly. That never happened with Route-LLM, but Route-LLM lacked the caching efficiency I badly needed.

The Revolution: DeepSeek V4 (April 2026)

Around April 25, 2026, something happened that caught the entire AI community off guard: the release of the latest DeepSeek model. A lot is claimed in the media, but in my world the rule is: the proof is in the numbers.

The first results of my tests are spectacular:

- Smart Caching: DeepSeek recognizes that my "Tool Docs" don't change between requests. Thanks to its prefix caching, I now pay up to 90% less for the tokens my documentation takes up. In recent logs I saw a cache hit ratio of no less than 85%!

- Agent-friendly: Where other major players put up barriers for agents, DeepSeek gives free rein.

- Better Context Retention: The model flawlessly maintains the thread of the creative process, even with enormous files.
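To put the two caching figures above together, here is a rough back-of-the-envelope sketch. It rests on assumptions that are mine, not taken from any price list: that the 90% reduction applies uniformly to every cached input token, that the 85% hit ratio is measured over input tokens, and that output tokens are left out entirely. The function name `effective_input_cost` is purely illustrative.

```python
def effective_input_cost(hit_ratio: float, cached_discount: float) -> float:
    """Return the input-token cost as a fraction of the fully uncached cost.

    hit_ratio:       share of input tokens served from the cache (assumed 0.85)
    cached_discount: price reduction on cached tokens (assumed 0.90)
    """
    if not (0.0 <= hit_ratio <= 1.0 and 0.0 <= cached_discount <= 1.0):
        raise ValueError("both arguments must be between 0 and 1")
    cached_part = hit_ratio * (1.0 - cached_discount)  # cheap, cached tokens
    uncached_part = 1.0 - hit_ratio                    # full-price tokens
    return cached_part + uncached_part


relative_cost = effective_input_cost(hit_ratio=0.85, cached_discount=0.90)
print(f"Input cost vs. no caching: {relative_cost:.1%}")
print(f"Saving on input tokens:    {1 - relative_cost:.1%}")
```

Under these assumptions the input side of the bill lands at roughly a quarter of the uncached cost. The "up to 90%" figure is only reached on the cached tokens themselves; the remaining full-price tokens are what keep the overall saving lower.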

The Next Step: The Intelligent Recovery Loop

Now that the infrastructure is in place, the real adventure can begin. I have developed tools that allow OpenClaw to check the quality of a video through specific mathematical calculations for image, audio and speech. In essence, this toolkit is a smart agent flow whose checks together render a final verdict on the work produced.

Auto-QC: Self-Correcting Capability

When the sensors detect an error, an automatic recovery loop kicks in that makes human intervention unnecessary in most cases:

- Detection: The QC agent marks a scene as "failed" (e.g. due to an incorrect balance in the music).

- Analysis & Re-prompting: The AI agent analyzes the error and rewrites the instructions with extra emphasis (e.g. "no music, raw ambient only").

- Reproduction: A new version is generated and immediately re-scanned by the mathematical sensors.

- Validation: This process repeats (up to a maximum of 6 attempts) until the scene passes QC.
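The four steps above can be sketched as a small control loop. This is a minimal illustration and not the actual OpenClaw tooling: `generate`, `check` and `reprompt` are hypothetical callables standing in for the video generator, the QC sensors and the re-prompting agent, and I am assuming that "6 times" means six generation attempts in total.

```python
from dataclasses import dataclass
from typing import Callable

MAX_ATTEMPTS = 6  # from the post; read here as six generations in total


@dataclass
class QCResult:
    passed: bool
    reason: str = ""  # why the scene failed, e.g. "music balance"


@dataclass
class Outcome:
    video: str
    attempts: int
    needs_human: bool


def auto_qc(
    prompt: str,
    generate: Callable[[str], str],       # hypothetical: prompt -> video reference
    check: Callable[[str], QCResult],     # hypothetical: video -> QC verdict
    reprompt: Callable[[str, str], str],  # hypothetical: (prompt, reason) -> new prompt
    max_attempts: int = MAX_ATTEMPTS,
) -> Outcome:
    """Generate, check and re-prompt until QC passes or attempts run out."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    video = ""
    for attempt in range(1, max_attempts + 1):
        video = generate(prompt)                  # Reproduction
        result = check(video)                     # Detection / Validation
        if result.passed:
            return Outcome(video, attempt, needs_human=False)
        prompt = reprompt(prompt, result.reason)  # Analysis & Re-prompting
    # Every attempt failed: hand the last version over to a human.
    return Outcome(video, max_attempts, needs_human=True)


# Toy stand-ins so the sketch runs without any real video tooling.
def fake_generate(p: str) -> str:
    return f"video<{p}>"


def fake_check(v: str) -> QCResult:
    return QCResult("no music" in v, reason="music balance")


def fake_reprompt(p: str, reason: str) -> str:
    return p + " (no music, raw ambient only)"


outcome = auto_qc("forest scene", fake_generate, fake_check, fake_reprompt)
print(outcome.attempts, outcome.needs_human)
```

Passing the three stages in as callables keeps the loop itself independent of any particular model or sensor, so the escalation rule (a human steps in only after the last failed attempt) can be tested in isolation.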

In short: Auto-QC is a tireless, intelligent orchestration that not only checks videos, but autonomously improves them until they meet the standard. Only when the machine truly cannot resolve it after six attempts is human assistance called in.

The OpenClaw adventure continues, but without the fear of an 'empty' memory or an unaffordable token bill. The machine learns, corrects and creates – in complete harmony.